Advent of Code 2020 Day 10

So, I completed today's Advent of Code puzzle and decided to run my tests with coverage to see what kind of code coverage I have so far (all examples and actual puzzle inputs are run via unit tests). But this was all secondary to the actual puzzle today. I spent over an hour trying to do part two WITHOUT recursion (because deep recursion can run into memory/call stack issues), but later resorted to it because I wasn't able to figure out how to calculate the answer mathematically. I later found the following tweet, which does it super simply (he also explains it in a follow-up tweet):

I completed "Adapter Array" - Day 10 - Advent of Code 2020, kind of a tricky one, some #maths is needed https://t.co/FdMdLYGJmf #AdventOfCode #Python #adventofcode2020 #Coding #Fun pic created with #@carbon_app pic.twitter.com/hgF3A0xQBB

— Thomas Loock 🇩🇪 🇪🇺 (@Brotherluii) December 10, 2020

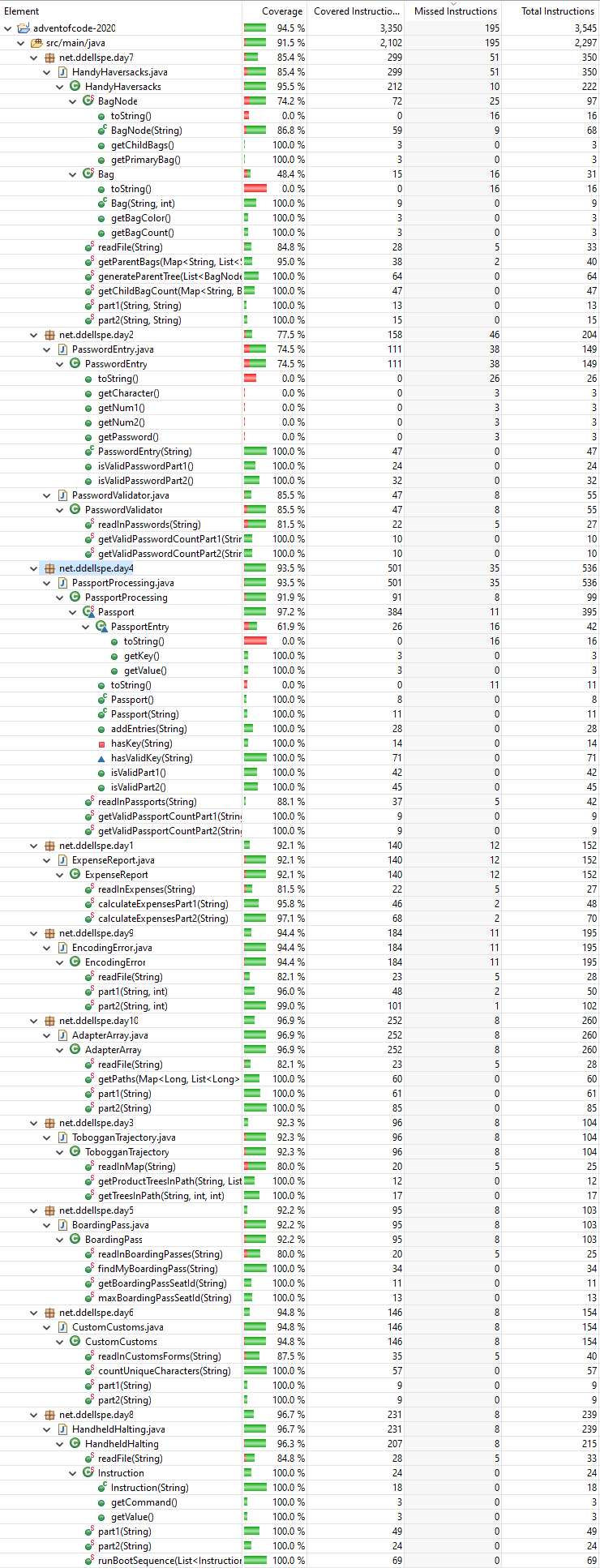

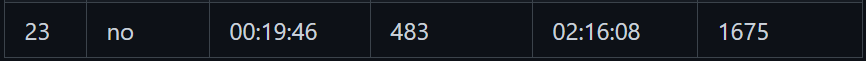

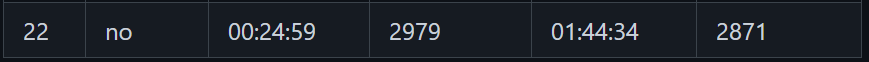
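For reference, one common way to do part two without recursion is a bottom-up count: sort the adapters, then for each joltage add up the number of ways to reach the one, two, or three joltages just below it. The block below is a minimal sketch of that idea, assuming the usual Day 10 rule that each adapter accepts an input 1 to 3 jolts lower than its rating. It is not a transcription of the tweeted solution or of the code in this repo, and the class and method names are made up for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class AdapterArrangements {

    // Counts the distinct adapter chains from the outlet (0 jolts) up to the
    // highest-rated adapter, where each step may rise by 1, 2 or 3 jolts.
    static long countArrangements(List<Integer> adapters) {
        List<Integer> sorted = new ArrayList<>(adapters);
        Collections.sort(sorted);

        // ways.get(j) = number of distinct chains that end exactly at joltage j
        Map<Integer, Long> ways = new HashMap<>();
        ways.put(0, 1L); // the outlet: exactly one way to be at 0 jolts

        for (int joltage : sorted) {
            long total = 0;
            for (int step = 1; step <= 3; step++) {
                // joltages that are not in the bag contribute 0 ways
                total += ways.getOrDefault(joltage - step, 0L);
            }
            ways.put(joltage, total);
        }

        // The device sits at max + 3, so it adds one fixed final step
        // and does not change the count.
        return sorted.isEmpty() ? 1L : ways.get(sorted.get(sorted.size() - 1));
    }

    public static void main(String[] args) {
        // Small example from the puzzle text
        List<Integer> example = Arrays.asList(16, 10, 15, 5, 1, 11, 7, 19, 6, 12, 4);
        System.out.println(countArrangements(example)); // prints 8
    }
}
```

Each adapter is visited once after sorting, so there is no call stack to worry about, and a long is used because the count grows very quickly with larger inputs.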

So, yeah... my solution sucks compared to the one in the tweet, but what I did learn was to sometimes take the methodical approach before trying to reinvent the wheel. Anyway, I want to write a bit more about code coverage, and maybe an issue with automated tools and Java streams. Below is a screenshot of the coverage tool's output for my GitHub repo as of my Day 10 submission.

The first thing I'll say is that all of my "read" methods will be missing at least one path, because I don't write tests for the IOException that's possible when reading files from the device (the file isn't readable, or the file doesn't exist). That's practical tests vs. full tests. Another example, for single methods, is a default return (because without a return the method doesn't compile) or a for loop where there's an expected early exit once the puzzle is solved. The last example is my data structures' getters and toString methods, which are really only set up for debugging purposes.

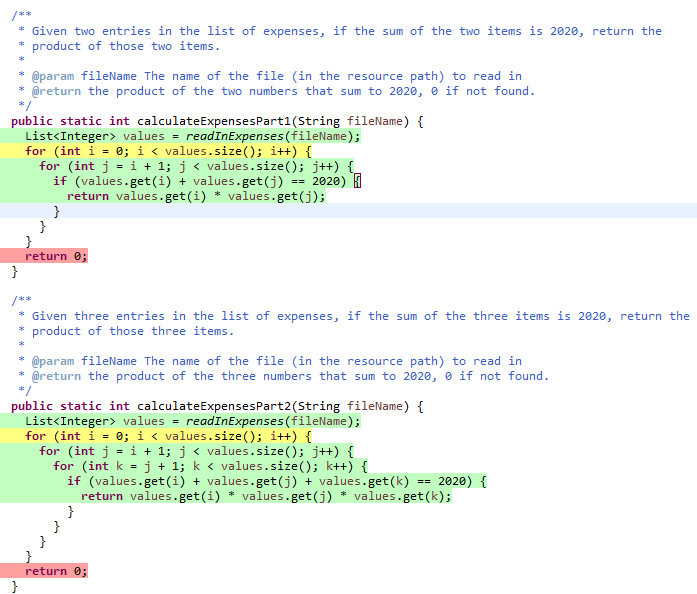
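To make the "read" case concrete, here is a minimal sketch of the kind of method that ends up with an uncovered path. The class name, method name, and the choice to return an empty list on failure are all assumptions for illustration, not the actual code from the repo.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Collections;
import java.util.List;

class PuzzleInputReader {

    // Hypothetical reader: returns every line of the file,
    // or an empty list when the file cannot be read.
    static List<String> readLines(Path file) {
        try {
            // Covered by any test that points at an existing input file
            return Files.readAllLines(file);
        } catch (IOException e) {
            // Only reached for a missing or unreadable file, so this branch
            // stays uncovered unless a test targets the failure on purpose
            return Collections.emptyList();
        }
    }
}
```

A "full" test would call readLines with a path that doesn't exist and assert on the empty result; a "practical" test suite that only feeds it real puzzle inputs never enters the catch block.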

To expand on the second example (for loops that I expect to exit early), here are screenshots of the specific code I have for Day 1.

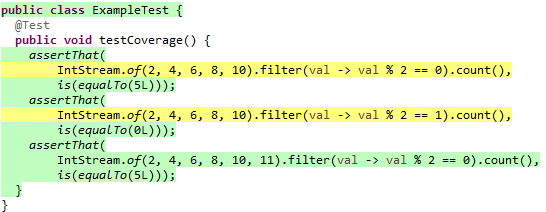

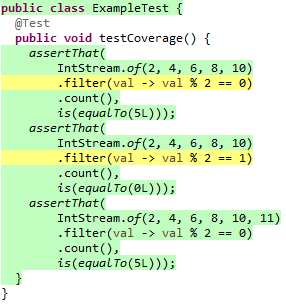
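Since the screenshots are images, here is a hypothetical text sketch of methods with the same shape, assuming the Day 1 task of finding the entries that sum to 2020 and multiplying them. Only the loop condition i < values.size() and the trailing return 0 come from this post; the class name, method names, and everything else are assumptions.

```java
import java.util.Arrays;
import java.util.List;

class ReportRepairSketch {

    static final int TARGET = 2020;

    // Part one shape: product of the two entries that sum to TARGET.
    static int productOfPair(List<Integer> values) {
        // When a match exists, this loop condition never evaluates to false
        for (int i = 0; i < values.size(); i++) {
            for (int j = i + 1; j < values.size(); j++) {
                if (values.get(i) + values.get(j) == TARGET) {
                    return values.get(i) * values.get(j); // the expected exit
                }
            }
        }
        return 0; // needed to compile; only reached when nothing matches
    }

    // Part two shape: same idea with three entries.
    static int productOfTriple(List<Integer> values) {
        for (int i = 0; i < values.size(); i++) {
            for (int j = i + 1; j < values.size(); j++) {
                for (int k = j + 1; k < values.size(); k++) {
                    if (values.get(i) + values.get(j) + values.get(k) == TARGET) {
                        return values.get(i) * values.get(j) * values.get(k);
                    }
                }
            }
        }
        return 0; // same default return as above
    }

    public static void main(String[] args) {
        // Example list from the Day 1 puzzle text
        List<Integer> example = Arrays.asList(1721, 979, 366, 299, 675, 1456);
        System.out.println(productOfPair(example));   // prints 514579
        System.out.println(productOfTriple(example)); // prints 241861950
    }
}
```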

In both of these methods, the for loop is yellow because it never reaches the point where the loop condition i < values.size() fails: we EXPECT to return from within the loop. The return 0 is red for the exact same reason; we expect the return to occur within the loop (and nothing in the example data or input data does otherwise).

All of this code coverage stuff is interesting, and I kind of wonder whether it actually works properly with streams, so I wanted to validate that. Most of the examples I can think of involve filter within streams, because I know there were instances where my filter functions ended up just passing everything through. To validate this, I'm going to try three cases: a filter where every element evaluates to true, a filter where every element evaluates to false, and a filter with both true and false results, to check that the paths are actually reported as covered (at least within Eclipse).

    assertThat(
            IntStream.of(2, 4, 6, 8, 10).filter(val -> val % 2 == 0).count(),
            is(equalTo(5L)));

    assertThat(
            IntStream.of(2, 4, 6, 8, 10).filter(val -> val % 2 == 1).count(),
            is(equalTo(0L)));

    assertThat(
            IntStream.of(2, 4, 6, 8, 10, 11).filter(val -> val % 2 == 0).count(),
            is(equalTo(5L)));

From this, I'd expect both the first and second examples to get a yellow highlight and the third to be green (only the third example exercises both sides of the filter). I'm going to code this up quickly and run it through Eclipse to validate.
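The reasoning behind that expectation can be sketched in plain Java by recording which results the filter predicate actually produces for each input. This is only bookkeeping that mimics what a branch coverage tool tracks, not how Eclipse's coverage works internally, and the class and method names are made up for illustration.

```java
import java.util.Set;
import java.util.TreeSet;
import java.util.function.IntPredicate;
import java.util.stream.IntStream;

class FilterBranchDemo {

    // Runs the predicate as a stream filter and returns the set of results
    // (true and/or false) that it produced for the given values.
    static Set<Boolean> observedResults(IntPredicate predicate, int... values) {
        Set<Boolean> seen = new TreeSet<>();
        IntStream.of(values)
                .filter(val -> {
                    boolean result = predicate.test(val);
                    seen.add(result); // remember which side of the filter was hit
                    return result;
                })
                .count(); // terminal operation, forces the filter to run
        return seen;
    }

    public static void main(String[] args) {
        // Case 1: every element passes, so only "true" is ever seen
        System.out.println(observedResults(val -> val % 2 == 0, 2, 4, 6, 8, 10));     // prints [true]
        // Case 2: every element is rejected, so only "false" is ever seen
        System.out.println(observedResults(val -> val % 2 == 1, 2, 4, 6, 8, 10));     // prints [false]
        // Case 3: mixed input, both sides of the filter are exercised
        System.out.println(observedResults(val -> val % 2 == 0, 2, 4, 6, 8, 10, 11)); // prints [false, true]
    }
}
```

Only the third call sees both results, which is why it is the only one where full (green) coverage of the lambda would be expected.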

So, coverage appears to be in line with what I expected, and after adding some line breaks, it looks like it specifically pinpoints the EXACT calls that are not fully tested.
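As an illustration of the line-break point, here is a minimal sketch of a stream chain split so that each call sits on its own line. It assumes the coverage view colors whole source lines, so putting the lambda on its own line lets a partial highlight land on that line alone; the class and method names are hypothetical.

```java
import java.util.stream.IntStream;

class EvenCounter {

    // Counts the even values, with one stream call per source line.
    static long countEven(int... values) {
        return IntStream.of(values)
                .filter(val -> val % 2 == 0) // the only line with a branch in it
                .count();
    }

    public static void main(String[] args) {
        System.out.println(countEven(2, 4, 6, 8, 10, 11)); // prints 5
    }
}
```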

I think this makes me more confident in my code coverage here. That doesn't matter much in general, but it's kind of helpful, since some open source projects require a certain level of code coverage for contributions. Again, none of this matters for Advent of Code; I just accidentally pressed a button, and that made me curious about how coverage works with streams.

If you want to join my Advent of Code leaderboard, feel free to join with the code: 699615-aae0e8af.
